RAG vs. Long-Context LLMs: A Comprehensive Study with a Cost-Effective Hybrid Approach

✨#QuickRead TL;DR

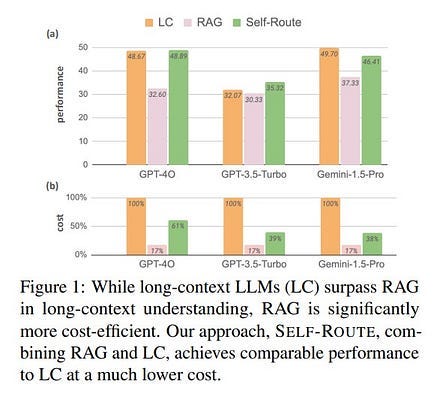

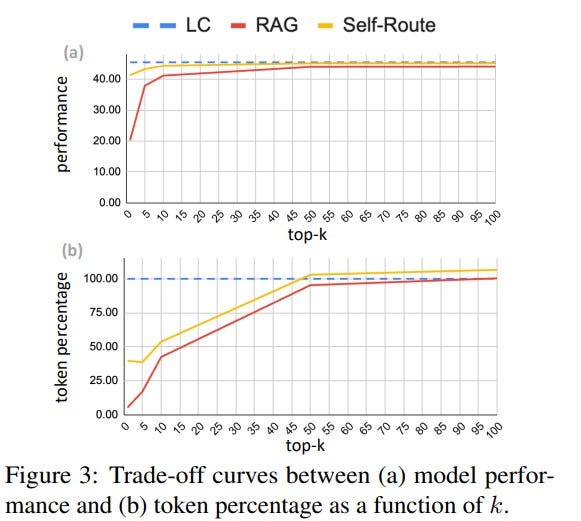

✨This research compares the effectiveness and efficiency of Retrieval Augmented Generation (RAG) and Long-Context (LC) capabilities in modern Large Language Models (LLMs). The authors propose a hybrid approach, termed #SELF_ROUTE, that combines the strengths of both methods to reduce cost while maintaining high performance.

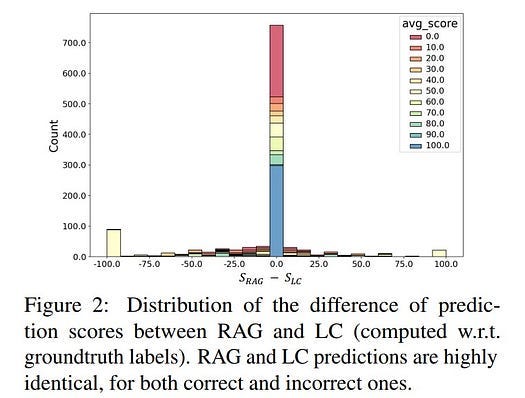

✨The #Research investigates the growing ability of LLMs to process long contexts directly, as seen in models like GPT-4 and Gemini-1.5, which challenges the need for RAG techniques. RAG traditionally supplements an LLM by retrieving relevant information from external sources to help answer a query, so the model does not have to process the entire context and the computational burden drops. The authors benchmark both RAG and LC across multiple datasets to identify their respective strengths and weaknesses.
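To make the retrieve-then-answer idea concrete, here is a minimal sketch of the retrieval step. It is an illustration only: the function names (`retrieve_top_k`, `build_prompt`) are hypothetical, and the bag-of-words cosine scorer is a toy stand-in for a learned dense retriever, not what the study used.

```python
import math
from collections import Counter


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve_top_k(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query (toy scorer, an assumption)."""
    q = Counter(query.lower().split())
    ranked = sorted(
        chunks,
        key=lambda c: cosine(q, Counter(c.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Assemble a short prompt from the retrieved chunks instead of the full context."""
    context = "\n".join(retrieve_top_k(query, chunks, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


chunks = [
    "paris is the capital of france",
    "bananas are yellow",
    "the capital of japan is tokyo",
]
print(build_prompt("capital of france", chunks, k=1))
```

The LLM then sees only the top-k chunks rather than the whole document, which is where RAG's cost saving comes from.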

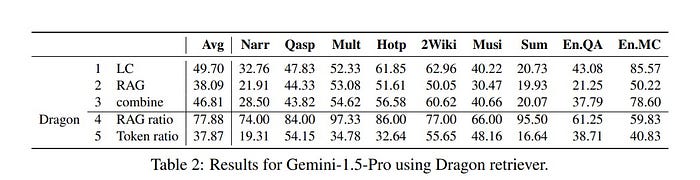

✨The research #method is a comparative benchmark across datasets sourced from #LongBench and #∞Bench, focusing on English, real-world, and query-based tasks. The datasets include a mix of synthetic and real texts, with contexts ranging from 7k to 100k tokens in length. Three modern LLMs are tested: #Gemini-1.5-Pro, #GPT-4o, and #GPT-3.5-Turbo. Two #retrievers, #Contriever and #Dragon, are used to evaluate RAG’s performance. The evaluation metrics are #F1 scores for open-ended QA tasks, accuracy for multiple-choice QA tasks, and #ROUGE scores for summarization tasks.
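For readers unfamiliar with the F1 metric for open-ended QA, the sketch below shows one common token-overlap formulation. It is an assumption about the general shape of the metric, not the study's exact scoring script: the function name `token_f1` is hypothetical, and tokenization here is plain lowercased whitespace splitting with no further answer normalization.

```python
from collections import Counter


def token_f1(prediction: str, reference: str) -> float:
    """Token-level F1 between a predicted answer and a reference answer."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # If either side is empty, score 1.0 only when both are empty.
    if not pred_tokens or not ref_tokens:
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most min(count) times.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


print(token_f1("the cat", "the cat sat"))
```

In this example precision is 1.0 and recall is 2/3, so the score is about 0.8. A partially correct answer earns partial credit, which is why F1 suits free-form answers better than exact match.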

✨The #results reveal that LC models consistently outperform RAG across most datasets and LLMs, especially…