Inside the Minds of LLMs: Learning vs Retrieval: Unveiling the Balance Between Learning and Knowledge Retrieval

✨✨ #QuickRead tl;dr✨✨

✨✨ Research Overview:

Researchers investigate the in-context learning (ICL) mechanism in large language models (LLMs), focusing on regression tasks. The study proposes the hypothesis that ICL lies on a spectrum between knowledge retrieval and learning from in-context examples. It also provides a framework for evaluating how LLMs balance these two mechanisms depending on factors such as the richness of the in-context examples and task-specific knowledge.

✨✨ Key Contributions:

- The research introduces the hypothesis that ICL operates on a spectrum between learning from in-context examples and retrieving internal knowledge. This reconciles competing theories that treat ICL as solely meta-learning or solely knowledge retrieval.

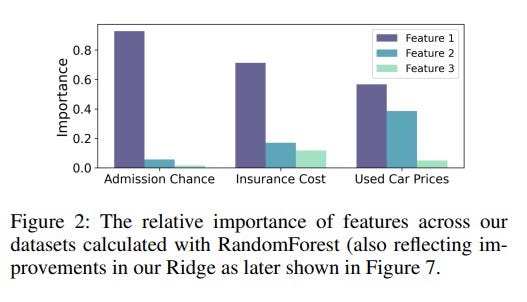

- The authors develop a framework to systematically assess LLM performance on regression tasks, examining how factors such as the number of in-context examples and the number of features influence the balance between learning and retrieval.

- The research demonstrates that LLMs can perform regression on real-world datasets, extending previous work that focused on synthetic data.

- The research shows how prompts can be engineered to control whether LLMs lean more towards knowledge retrieval or learning from examples, offering practical tools for optimizing LLM performance.
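To make the prompt-engineering idea concrete, here is a minimal sketch of how a few-shot regression prompt could be assembled while varying the number of in-context examples and whether feature names are shown. The function `build_regression_prompt`, its parameters, the prompt layout, and the toy housing values are all assumptions made for illustration. This is not the authors' actual prompt format.

```python
from typing import Sequence, Tuple


def build_regression_prompt(
    examples: Sequence[Tuple[Sequence[float], float]],
    query: Sequence[float],
    feature_names: Sequence[str],
    target_name: str,
    n_examples: int,
    anonymize: bool = False,
) -> str:
    """Assemble a few-shot regression prompt (hypothetical layout)."""
    if anonymize:
        # Hide semantic cues so the model can only use the numeric values.
        names = [f"Feature {i + 1}" for i in range(len(feature_names))]
        target = "Output"
    else:
        # Real names may let the model draw on what it already knows.
        names = list(feature_names)
        target = target_name

    def render(values: Sequence[float]) -> str:
        return ", ".join(f"{name}: {value}" for name, value in zip(names, values))

    # Use only the first n_examples demonstrations, then append the query.
    blocks = [f"{render(x)}\n{target}: {y}" for x, y in examples[:n_examples]]
    blocks.append(f"{render(query)}\n{target}:")
    return "\n\n".join(blocks)


# Invented toy data, for illustration only.
toy_examples = [([3, 70.5], 250.0), ([2, 48.0], 180.0)]
toy_query = [4, 95.0]

print(build_regression_prompt(
    toy_examples, toy_query, ["rooms", "area"], "price", n_examples=2))
print("-----")
print(build_regression_prompt(
    toy_examples, toy_query, ["rooms", "area"], "price",
    n_examples=1, anonymize=True))
```

Setting `n_examples=0` with real feature names leaves the model nothing but its internal knowledge. Hiding the names while supplying many examples pushes it towards learning from the demonstrations.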

✨✨ Methods:

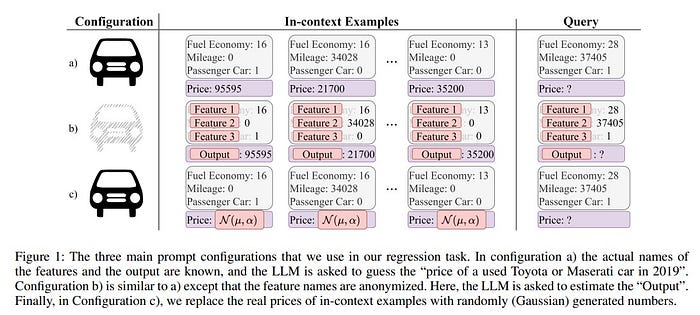

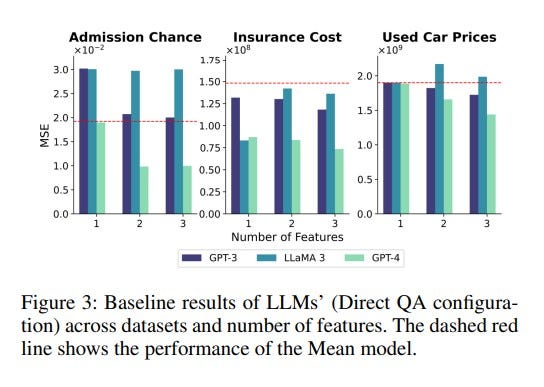

The authors test their hypothesis using three LLMs (GPT-3.5, GPT-4, and LLaMA-3) on multiple datasets representing real-world regression tasks. They also design experiments with different prompt configurations, including:

- Named Features: revealing the actual feature names and asking the LLM to estimate outputs.

- Anonymized Features: hiding the feature names so that the LLM must rely on the numeric values.

- Randomized…